| POD parameters : | OpenStack Group-1 | user0 | aio110 | 10.1.64.110 | compute120 | 10.1.64.120 | [email protected] |

| User | aioX | computeY | Network & Allocation Pool |
|---|---|---|---|
| user0; ssh : [email protected]; vnc : lab.onecloudinc.com:5900 | aio110; eth0 : 10.1.64.110; eth1 : 10.1.65.110; eth2 : ext-net; Netmask : 255.255.255.0; Gateway : 10.1.64.1 | compute120; eth0 : 10.1.64.120; eth1 : 10.1.65.120; eth2 : ext-net; Netmask : 255.255.255.0 | Float Range : 10.1.65.00 – 10.1.65.00; Network : 10.1.65.0/24; Gateway : 10.1.65.1; DNS : 10.1.1.92 |

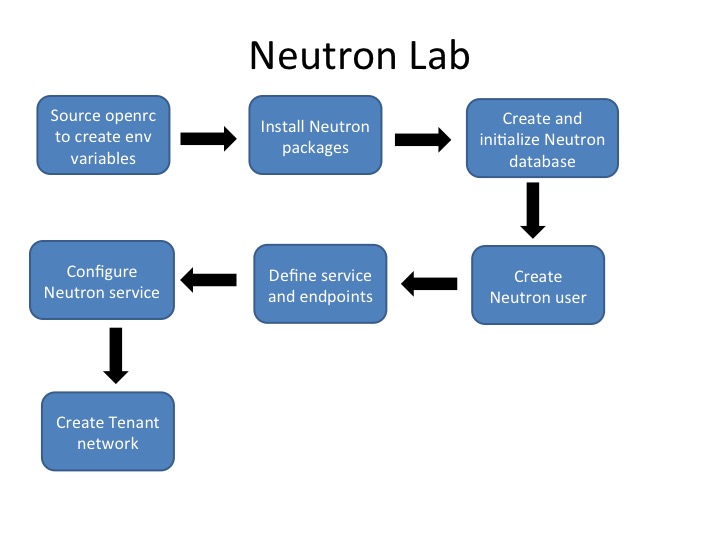

In this lab we will install the OpenStack Networking service (Neutron). Like its predecessor, nova-network, Neutron manages the network interactions for your OpenStack installation. Unlike nova-network, however, Neutron can be configured for advanced virtual network topologies, such as per-tenant private networks, and can be extended through additional embedded capabilities and pluggable services.

This lab will use the Modular Layer 2 (ML2) plugin to enable a simple GRE tunnel overlay network, which will be used to create per-tenant private networks. Our configuration will also include an “external” network, a special designation in Neutron that lets us create a NAT (Network Address Translation) connectivity model across a software router (in our case an iptables router on the AIO node). We will “overload” a physical port on our network (eth1 in this case) so that our VMs can get “out”, and so that we can get “in” to them via a path other than the VNC service we set up in the Nova lab.

Once we have completed the basic Neutron installation, we are also finally at a point where we can start a Virtual Machine in our “Cloud” for the first time.

We will use the CLI interface to boot our first “Cloud Instance” and will associate an address to it in order to allow us to ssh into the instance.

The next lab will have us configure a second Nova compute node, so that our network can span an external physical environment, which is where the tunneling “SDN-like” capabilities become useful. We will also verify that communication continues to work properly in this newly connected environment.

Neutron Installation

Step 1: As with the previous labs, you will need to SSH into the AIO node.

If you have logged out, SSH into your AIO node:

ssh centos@aio110

If asked, the user password (like the sudo password) is centos. Then become root via sudo:

sudo su -

Then we’ll source the OpenStack administrative user credentials. As you’ll recall from the previous lab, this sets a number of environment variables (OS_USERNAME, etc.) that are then picked up by the command line tools (like the openstack and neutron tools we’ll be using in this lab) so that we don’t have to pass the equivalent --os-username command line options for each command we run:

source ~/openrc.sh

Step 2: Install the packaged Neutron services.

yum install openstack-neutron openstack-neutron-ml2 python-neutronclient -y

After executing the above command, you have installed:

- openstack-neutron: exposes the Neutron API, and passes all web-service calls to the Neutron plugin for processing.

- openstack-neutron-ml2: The Modular Layer 2 (ML2) plugin, a framework that lets OpenStack Networking use a variety of layer 2 networking technologies simultaneously. It currently works with the existing Open vSwitch, Linux Bridge, and Hyper-V L2 agents, and is intended to replace and deprecate the monolithic plugins associated with those agents.

- python-neutronclient: The python based CLI tools for communicating with the Neutron API(s)

Note: While it is possible to use OpenStack without a virtual switching layer, we are going to use the most common virtual switch in production OpenStack environments. We’ll install the Neutron package for Open vSwitch (OVS), which also installs the OVS switch itself.

yum install openstack-neutron-openvswitch -y

Now you have just installed:

- openstack-neutron-openvswitch: The Neutron plugin agent for Open vSwitch; installing it also pulls in Open vSwitch itself.
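As a quick sanity check after the two yum commands, one can confirm that each package is actually present. The following is a minimal sketch, assuming an RPM-based host where `rpm -q` is available; the helper name `check_packages` is made up for this illustration and is not part of OpenStack or RDO.

```shell
#!/bin/sh
# check_packages is a hypothetical helper for this lab, not an OpenStack tool.
# It prints one line per package: "ok <pkg>" or "MISSING <pkg>".
check_packages() {
    for pkg in "$@"; do
        # rpm -q exits non-zero when the package is not installed
        if rpm -q "$pkg" >/dev/null 2>&1; then
            echo "ok $pkg"
        else
            echo "MISSING $pkg"
        fi
    done
}

# The four packages installed in Step 2
check_packages openstack-neutron openstack-neutron-ml2 \
    python-neutronclient openstack-neutron-openvswitch
```

Any line starting with MISSING suggests re-running the corresponding yum install before continuing.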

Database Creation

Step 3: Create neutron database and add the neutron user access credentials (guess which password? Yep: pass):

mysql -u root -ppass

CREATE DATABASE neutron;

GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'localhost' IDENTIFIED BY 'pass';

GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'%' IDENTIFIED BY 'pass';

exit

Step 4: Create the neutron user. Again we’ll use pass as the password.

openstack user create neutron --password pass --email [email protected]

Example output:

+----------+----------------------------------+

| Property | Value |

+----------+----------------------------------+

| email | [email protected] |

| enabled | True |

| id | 65ccba8b996d4cd5bdee830408ca8f3b |

| name | neutron |

| username | neutron |

+----------+----------------------------------+

We’re also going to map the neutron user to the service tenant with the admin role, as we’ve done for the other services:

openstack role add --project service --user neutron admin

Step 5: Define services and service endpoints.

This should start to look very familiar. We’re now going to update the Keystone catalog, first with a service definition of type network named neutron:

openstack service create --name neutron --description "OpenStack Networking Service" network

Example output:

+-------------+----------------------------------+

| Property | Value |

+-------------+----------------------------------+

| description | OpenStack Networking Service |

| enabled | True |

| id | 389a29e4862f4d879e52c1a407cc7d1a |

| name | neutron |

| type | network |

+-------------+----------------------------------+

And we’ll then add the service endpoint to the catalog as well:

openstack endpoint create --publicurl http://aio110:9696 --internalurl http://aio110:9696 --adminurl http://aio110:9696 --region RegionOne network

Example output:

+--------------+----------------------------------+

| Field | Value |

+--------------+----------------------------------+

| adminurl | http://aio110:9696 |

| id | 74e67fb97a43465681781685d747ed40 |

| internalurl | http://aio110:9696 |

| publicurl | http://aio110:9696 |

| region | RegionOne |

| service_id | 8112b1263ce647bda5e50460f115c342 |

| service_name | neutron |

| service_type | network |

+--------------+----------------------------------+

Step 6: Modify the AIO node kernel to allow IPv4 traffic forwarding (i.e. routing). Since our AIO node is taking on the role of our network services node, and because we want to use the basic routing functions enabled via OpenStack, we’re going to configure the kernel to allow this (ip_forward).

We’re also going to turn off “Reverse Path Filtering” (rp_filter=0). When it is on, the kernel drops any packet whose source address is not reachable back through the interface the packet arrived on. That check protects against spoofed traffic on a conventional host, but it can discard legitimate packets on a node that routes tenant traffic through bridges and “VRF”-equivalent namespaces, so we turn it off here. These parameters are set in the /etc/sysctl.conf file, and we can just add them to the end of the file as follows:

cat >> /etc/sysctl.conf <<EOF

net.ipv4.ip_forward=1

net.ipv4.conf.all.rp_filter=0

net.ipv4.conf.default.rp_filter=0

EOF
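Note that `cat >>` appends every time it runs, so repeating this step leaves duplicate lines in the file. A hedged alternative that only appends a setting when an identical line is not already present might look like the sketch below. `ensure_line` is a hypothetical helper, and `SYSCTL_FILE` is a variable introduced here so the snippet can be tried on a scratch file first.

```shell
#!/bin/sh
# Hypothetical variable: defaults to a scratch file so nothing under /etc is
# touched unless you explicitly set SYSCTL_FILE=/etc/sysctl.conf.
SYSCTL_FILE=${SYSCTL_FILE:-/tmp/sysctl-neutron-demo.conf}

# ensure_line FILE LINE: append LINE to FILE unless an identical line exists.
ensure_line() {
    touch "$1"
    # -x: match the whole line, -F: treat LINE as a fixed string
    grep -qxF -- "$2" "$1" || echo "$2" >> "$1"
}

ensure_line "$SYSCTL_FILE" "net.ipv4.ip_forward=1"
ensure_line "$SYSCTL_FILE" "net.ipv4.conf.all.rp_filter=0"
ensure_line "$SYSCTL_FILE" "net.ipv4.conf.default.rp_filter=0"
```

This only guards against exact duplicates; a pre-existing line that sets the same key to a different value would still need to be edited by hand.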

Run the following command to load the changes you just made. It also prints the values it applied, for confirmation:

sysctl -p

Configure Neutron Service

Step 7: While we didn’t install the large number of separate packages that we installed with Nova, we still have a lot of configuration to do. Certainly there’s the initial defaults (database, message queue, and keystone), and there are the connections to other services (nova in this case), but we’re also going to configure ML2, a set of specific services (things like Routing are “extra” on top of the base Neutron), and there are also some components that are very “networky” like IPAM that we’ve got some configuration needs for.

Let’s take them one at a time:

First, let’s get our Keystone Configuration set:

openstack-config --set /etc/neutron/neutron.conf keystone_authtoken auth_uri http://aio110:5000/v2.0

openstack-config --set /etc/neutron/neutron.conf keystone_authtoken identity_uri http://aio110:35357

openstack-config --set /etc/neutron/neutron.conf keystone_authtoken admin_tenant_name service

openstack-config --set /etc/neutron/neutron.conf keystone_authtoken admin_user neutron

openstack-config --set /etc/neutron/neutron.conf keystone_authtoken admin_password pass

And we also have to tell the system that we’re using Keystone for authentication:

openstack-config --set /etc/neutron/neutron.conf DEFAULT auth_strategy keystone

Next we’ll add the RabbitMQ parameters, not much to tell the system, as most of it is done by the applications connecting to the server, but “here’s how to connect”:

openstack-config --set /etc/neutron/neutron.conf DEFAULT rpc_backend rabbit

openstack-config --set /etc/neutron/neutron.conf DEFAULT rabbit_host aio110

openstack-config --set /etc/neutron/neutron.conf DEFAULT rabbit_userid test

openstack-config --set /etc/neutron/neutron.conf DEFAULT rabbit_password test

And the same is effectively true for the database. We’ll migrate to the current configuration later, and this is just ensuring that the resources can access the database with the credentials we set earlier in this lab:

openstack-config --set /etc/neutron/neutron.conf database connection mysql://neutron:pass@aio110/neutron
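The repeated `openstack-config --set` calls in this step all share one shape: file, section, key, value. As an optional sketch, they could be driven from a small table instead. The `apply_settings` function and the `CONFIG_TOOL` override are assumptions made for this illustration; with `CONFIG_TOOL=echo` the function only prints what it would run.

```shell
#!/bin/sh
# CONFIG_TOOL is a hypothetical override added for this sketch; it defaults to
# the real openstack-config command. Setting CONFIG_TOOL=echo gives a dry run.
CONFIG_TOOL=${CONFIG_TOOL:-openstack-config}

# apply_settings FILE: read "section key value" triples from stdin and
# apply each one with "<tool> --set FILE section key value".
apply_settings() {
    file=$1
    while read -r section key value; do
        # skip blank lines and comment lines
        case $section in ''|'#'*) continue ;; esac
        "$CONFIG_TOOL" --set "$file" "$section" "$key" "$value"
    done
}

# Example (commented out so that running this file changes nothing):
# apply_settings /etc/neutron/neutron.conf <<EOF
# DEFAULT  rpc_backend     rabbit
# DEFAULT  rabbit_host     aio110
# DEFAULT  rabbit_userid   test
# DEFAULT  rabbit_password test
# database connection      mysql://neutron:pass@aio110/neutron
# EOF
```

The one-command-per-line form used in this lab is easier to follow step by step; the table form mainly helps when the same settings have to be replayed on another node.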

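The next three configuration elements select ML2 as the core plugin, enable the router (L3) service plugin, and allow tenants to use overlapping IP address ranges: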

openstack-config --set /etc/neutron/neutron.conf DEFAULT core_plugin ml2

openstack-config --set /etc/neutron/neutron.conf DEFAULT service_plugins router

openstack-config --set /etc/neutron/neutron.conf DEFAULT allow_overlapping_ips True

Next we establish our connection parameters to Nova, along with the events about which we want to notify Nova (port status and port data changes specifically):

openstack-config --set /etc/neutron/neutron.conf DEFAULT nova_admin_password pass

openstack-config --set /etc/neutron/neutron.conf DEFAULT nova_admin_auth_url http://aio110:35357/v2.0

openstack-config --set /etc/neutron/neutron.conf DEFAULT nova_region_name regionOne

openstack-config --set /etc/neutron/neutron.conf DEFAULT notify_nova_on_port_status_changes True

openstack-config --set /etc/neutron/neutron.conf DEFAULT notify_nova_on_port_data_changes True

openstack-config --set /etc/neutron/neutron.conf DEFAULT nova_url http://aio110:8774/v2

openstack-config --set /etc/neutron/neutron.conf DEFAULT nova_admin_username nova
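To confirm what the commands above wrote, a value can be read back from the file. The sketch below does this with plain awk; `ini_get` is a hypothetical helper, and it assumes simple `key = value` or `key=value` lines under `[section]` headers with no line continuations.

```shell
#!/bin/sh
# ini_get FILE SECTION KEY: print the value of KEY inside [SECTION].
# Hypothetical helper for illustration; assumes simple "key = value" lines.
ini_get() {
    awk -v section="$2" -v key="$3" '
        # a section header: remember its name without the brackets
        /^\[/ { current = $0; gsub(/^\[|\][ \t]*$/, "", current); next }
        current == section {
            eq = index($0, "=")
            if (eq == 0) next
            k = substr($0, 1, eq - 1)
            v = substr($0, eq + 1)
            gsub(/^[ \t]+|[ \t]+$/, "", k)
            gsub(/^[ \t]+|[ \t]+$/, "", v)
            if (k == key) { print v; exit }
        }
    ' "$1"
}

# Example: ini_get /etc/neutron/neutron.conf DEFAULT core_plugin
```

An empty result means the key is not set in that section, which usually points at a typo in the section or key name of an earlier command.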

Step 8: Define the Nova Service Tenant

For the configuration steps above, we’ve not really had to find anything unique to this environment, but the actual ID of the nova services tenant is a little different. We have to look it up, as we didn’t explicitly set it ourselves in any previous step. We can do that with the following command:

openstack project show service

Example output:

+-------------+----------------------------------+

| Property | Value |

+-------------+----------------------------------+

| description | Service Project |

| enabled | True |

| id | 01a81ad205144aafa663da745efdb5d9 |

| name | service |

+-------------+----------------------------------+

With that output, we could grab the ID value (select it and copy it) for our openstack-config command, but since we’re using command line tools we can use an inline lookup instead:

openstack-config --set /etc/neutron/neutron.conf DEFAULT nova_admin_tenant_id `openstack project list | grep service | cut -d " " -f 2`

That’s the Neutron server configuration. Now we need to configure all of the other services that support Neutron.
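One caveat with the inline lookup: `grep service` matches any row that contains the string "service", so a second project with a similar name would yield more than one ID. A slightly stricter sketch matches the Name column exactly. `project_id` is a hypothetical helper; the sample table reuses the service ID from the example output above, and the second row is made up purely to show the difference.

```shell
#!/bin/sh
# project_id NAME: read an "openstack project list" style table on stdin and
# print the ID of the row whose Name column equals NAME exactly.
project_id() {
    awk -F'|' -v name="$1" '
        NF >= 4 {
            id = $2; n = $3
            gsub(/^[ \t]+|[ \t]+$/, "", id)
            gsub(/^[ \t]+|[ \t]+$/, "", n)
            if (n == name) print id
        }
    '
}

# Illustrative input; the second row is invented for this example.
project_id service <<EOF
+----------------------------------+---------------+
| ID                               | Name          |
+----------------------------------+---------------+
| 01a81ad205144aafa663da745efdb5d9 | service       |
| ffffffffffffffffffffffffffffffff | services-demo |
+----------------------------------+---------------+
EOF
```

On the lab host the same function would be fed from the live command, as in `openstack project list | project_id service`.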

Step 9: DHCP Service Config

We’ll configure the Dnsmasq DHCP (and, as the name implies, DNS) service. Dnsmasq is currently the only ‘easy’ option for this service in OpenStack, though there is work to support others, including tools like Cisco Network Registrar and InfoBlox. In our case the DHCP agent will also run on the AIO node, though it can be configured to run across multiple compute hosts for better scale and availability. The DHCP agent expects its configuration in /etc/neutron/dhcp_agent.ini, so we’ll again use the openstack-config tool to set the key/value data needed: the interface driver (how the agent talks to a virtual or physical port), the DHCP driver (Dnsmasq), and the use and management of namespaces (the equivalent of Layer 3 segregation, like a Virtual Routing and Forwarding (VRF) model router).

openstack-config --set /etc/neutron/dhcp_agent.ini DEFAULT interface_driver neutron.agent.linux.interface.OVSInterfaceDriver

openstack-config --set /etc/neutron/dhcp_agent.ini DEFAULT dhcp_driver neutron.agent.linux.dhcp.Dnsmasq

openstack-config --set /etc/neutron/dhcp_agent.ini DEFAULT use_namespaces True

openstack-config --set /etc/neutron/dhcp_agent.ini DEFAULT dhcp_delete_namespaces True

Step 10: Configure L3 Agent

We will use the L3 agent model of L3 routing. This is an embedded driver in the Neutron server that talks (via the agent) to an iptables router. The agent and router are also going to run on our AIO node. We’ll provide the L3 agent with access information for our OVS switch infrastructure (specifically, which bridge is the external bridge), and we’ll enable the same VRF-like namespace model that we used for the DHCP agent. The L3 agent uses its own config file, so we’ll make modifications to it at /etc/neutron/l3_agent.ini:

openstack-config --set /etc/neutron/l3_agent.ini DEFAULT interface_driver neutron.agent.linux.interface.OVSInterfaceDriver

openstack-config --set /etc/neutron/l3_agent.ini DEFAULT use_namespaces True

openstack-config --set /etc/neutron/l3_agent.ini DEFAULT external_network_bridge br-ex

openstack-config --set /etc/neutron/l3_agent.ini DEFAULT router_delete_namespaces True

Step 11: The ML2 plugin configuration lives in the /etc/neutron/plugins/ml2/ml2_conf.ini file. With it we specify which network types (VLAN, GRE, etc.) and mechanism drivers (OVS, Linux Bridge, Cisco Nexus, etc.) are available, either for tenant or provider (aka admin) use. We will configure both the flat (no overlay or VLANs) and GRE type drivers, selecting GRE as the default tenant network model. We’ll also enable Open vSwitch as our L2 mechanism driver (we could add more, like the Nexus or even Linux Bridge drivers).

Each type driver has configuration options: for type_flat we’ll define an association that limits flat networks to the “external” network interface, and for type_gre we’ll define the range of IDs for tunnels (in this case limited to IDs 1 – 1000). We’re including a security group configuration that points the system at the hybrid OVS model (this is the iptables model that was discussed in the lectures, using a per-VM Linux Bridge segment to apply security to), and we need to define the OVS integration points (the external network should be attached to br-ex, and we’ll enable tunnel connectivity for GRE):

openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2 type_drivers flat,gre

openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2 tenant_network_types gre

openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2 mechanism_drivers openvswitch

openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2_type_flat flat_networks external

openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2_type_gre tunnel_id_ranges 1:1000

openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini securitygroup firewall_driver neutron.agent.linux.iptables_firewall.OVSHybridIptablesFirewallDriver

openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini securitygroup enable_security_group True

openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini securitygroup enable_ipset True

openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ovs local_ip 10.1.65.110

openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ovs enable_tunneling True

openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ovs bridge_mappings external:br-ex

openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini agent tunnel_types gre
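One consistency rule worth checking in this file: every entry in tenant_network_types should also appear in type_drivers, since a tenant network type without a loaded type driver cannot be allocated. The sketch below performs that check on two comma-separated strings; `types_subset` is a hypothetical helper written for this lab, not a Neutron tool.

```shell
#!/bin/sh
# types_subset TENANT_TYPES TYPE_DRIVERS: both are comma-separated lists.
# Succeeds (exit 0) when every tenant type is also listed as a type driver;
# otherwise prints the first missing type and returns 1.
types_subset() {
    old_ifs=$IFS
    IFS=,
    for t in $1; do
        case ",$2," in
            *",$t,"*) ;;    # present, keep going
            *) IFS=$old_ifs; echo "missing type driver: $t"; return 1 ;;
        esac
    done
    IFS=$old_ifs
}

# Values from this step: type_drivers=flat,gre and tenant_network_types=gre
types_subset gre flat,gre && echo "tenant_network_types is consistent"
```

With the values configured here the check passes; it would fail if, for example, tenant_network_types were later changed to vxlan without adding vxlan to type_drivers.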

Step 12: Metadata service allows a VM instance to retrieve instance specific data like SSH keys, startup scripts etc. The request is received by the Metadata agent and is relayed to nova. We’ll update the /etc/neutron/metadata_agent.ini file, principally with access information so that the metadata service can query nova (the compute manager) for parameters specific to any incoming metadata requests.

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT auth_url http://aio110:5000/v2.0

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT auth_region regionOne

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT admin_tenant_name service

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT admin_user neutron

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT admin_password pass

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT nova_metadata_ip aio110

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT metadata_proxy_shared_secret pass

Step 13: Update Nova with network service information.

Now that we’ve completed configuring Neutron, we’ll go back to the Nova configuration to add in the missing but essential Network communications information so that these services can all properly interact!

First we’ll tell Nova that Neutron is its network API and security group API, that the network will be attached via the OVS interface driver, and that it will use Neutron for its security group functions (even if it’s still nova-compute that configures the edge Linux Bridge security segment). We’ll configure the other half of the metadata configuration from the section above (matching shared secrets specifically), and lastly, we’ll ensure that Nova has credentials with which to talk to Neutron.

openstack-config --set /etc/nova/nova.conf DEFAULT network_api_class nova.network.neutronv2.api.API

openstack-config --set /etc/nova/nova.conf DEFAULT security_group_api neutron

openstack-config --set /etc/nova/nova.conf DEFAULT linuxnet_interface_driver nova.network.linux_net.LinuxOVSInterfaceDriver

openstack-config --set /etc/nova/nova.conf DEFAULT firewall_driver nova.virt.firewall.NoopFirewallDriver

openstack-config --set /etc/nova/nova.conf neutron service_metadata_proxy true

openstack-config --set /etc/nova/nova.conf neutron metadata_proxy_shared_secret pass

openstack-config --set /etc/nova/nova.conf neutron url http://aio110:9696

openstack-config --set /etc/nova/nova.conf neutron auth_strategy keystone

openstack-config --set /etc/nova/nova.conf neutron admin_tenant_name service

openstack-config --set /etc/nova/nova.conf neutron admin_username neutron

openstack-config --set /etc/nova/nova.conf neutron admin_password pass

openstack-config --set /etc/nova/nova.conf neutron admin_auth_url http://aio110:35357/v2.0

Step 14: Populate the database

We’ve finished the configuration stages, so we now have the right credentials to talk to the database. We’ll use that capacity now to migrate the database from “empty” to “current”, getting our tables and schema properly installed. Note that we’re passing both the neutron and ml2 configuration files to the manage tool so that it can pass in data it’s collected out of those configuration files. This is a bit different than most of the other services, mostly because of the huge number of different model/schema components that exist, and different configuration files will trigger different tables to be added/populated in the process.

su -s /bin/sh -c "neutron-db-manage --config-file /etc/neutron/neutron.conf --config-file /etc/neutron/plugins/ml2/ml2_conf.ini upgrade head" neutron

Step 15: Address some minor initialization script inconsistencies

While the packaged installation from the RDO project is good (that’s where we are getting our installation packages from), there are still some minor glitches, principally around _which_ configuration files should be pointed at for starting services.

The Networking service initialization script (specifically the neutron-server script) expects a symbolic link, /etc/neutron/plugin.ini, pointing to the ML2 plug-in configuration file, /etc/neutron/plugins/ml2/ml2_conf.ini. If this symbolic link does not exist, create it using the following command:

ln -s /etc/neutron/plugins/ml2/ml2_conf.ini /etc/neutron/plugin.ini

In addition, the openvswitch-agent startup script expects to use a plugin file from before the ML2 days; we need to point it instead at the plugin.ini link we just created. The following sed command will remedy this issue:

sed -i 's,plugins/openvswitch/ovs_neutron_plugin.ini,plugin.ini,g' /usr/lib/systemd/system/neutron-openvswitch-agent.service

Step 16: Start and configure the OVS components:

First enable and start the service as we’ve done before. We will be modifying OVS configuration, so the switch (and its configuration database, which is the switch controller in this particular case) needs to be up and running.

systemctl enable openvswitch.service

systemctl start openvswitch.service

systemctl status openvswitch.service

OVS will automatically create the br-int integration bridge, and the OpenStack OVS agent is able to create and connect tunnel-based networks (the br-tun OVS bridge) on demand. But the br-ex OVS bridge that we told Neutron to use earlier doesn’t exist yet; it needs to be created and to have its physical port mapped to it.

So we’ll do two things:

1) Create interface configuration files so that the interfaces will be recovered after a reboot

2) Start the interfaces to create the bridge and associate the physical interface

The easiest way to create these config files (as they either don’t exist, or aren’t the right configuration) is to just create them from scratch:

cat > /etc/sysconfig/network-scripts/ifcfg-br-ex <<EOF

DEVICE=br-ex

BOOTPROTO=static

ONBOOT=yes

IPADDR=10.1.65.110

PREFIX=24

MTU=1400

TYPE=OVSBridge

OVS_Bridge=br-ex

DEVICETYPE=ovs

EOF

And for our “external” interface (eth1, which has donated its address to the br-ex bridge), we attach the interface to the external bridge br-ex, which completes the basic configuration connecting the external bridge to the external physical interface.

cat > /etc/sysconfig/network-scripts/ifcfg-eth1 <<EOF

DEVICE=eth1

ONBOOT=yes

MTU=1400

TYPE=OVSPort

DEVICETYPE=ovs

OVS_BRIDGE=br-ex

EOF

If we now bring up the br-ex and eth1 interfaces, we should find that the OVS bridge br-ex is up and running and has eth1 associated with it:

ifdown br-ex; ifup br-ex; ifdown eth1; ifup eth1

ovs-vsctl list-ports br-ex

Example output:

eth1

Step 17: Restart the rest of the services:

Since we modified the Nova configuration, and we’ve already ensured that these services were running, we’ll restart them in order to get them to re-read their configuration:

systemctl restart openstack-nova-compute.service openstack-nova-api.service openstack-nova-scheduler.service openstack-nova-conductor.service

Enable the Neutron services so that they survive a reboot, then start them and check their status:

systemctl enable openvswitch.service neutron-openvswitch-agent.service neutron-l3-agent.service neutron-dhcp-agent.service neutron-metadata-agent.service neutron-server.service

systemctl start openvswitch.service neutron-openvswitch-agent.service neutron-l3-agent.service neutron-dhcp-agent.service neutron-metadata-agent.service neutron-server.service

systemctl status openvswitch.service neutron-openvswitch-agent.service neutron-l3-agent.service neutron-dhcp-agent.service neutron-metadata-agent.service neutron-server.service

Now check that all the Neutron agents are working:

neutron agent-list

Example output:

+--------------------------------------+--------------------+--------+-------+----------------+---------------------------+

| id | agent_type | host | alive | admin_state_up | binary |

+--------------------------------------+--------------------+--------+-------+----------------+---------------------------+

| 1126a036-097b-4062-9b3e-a5ff09be86ea | Metadata agent | aio110 | :-) | True | neutron-metadata-agent |

| 2d10bb4d-ee10-4302-8a2a-e3bba01f07c6 | L3 agent | aio110 | :-) | True | neutron-l3-agent |

| 65548614-2814-4259-bf29-d95dfb58d8cd | DHCP agent | aio110 | :-) | True | neutron-dhcp-agent |

| 9bc34a36-cc8b-4c91-bf42-f2b982c42401 | Open vSwitch agent | aio110 | :-) | True | neutron-openvswitch-agent |

+--------------------------------------+--------------------+--------+-------+----------------+---------------------------+

Create Networks and their Subnets

Step 18: External “Floating IP” network

We’ll create an “external” network on which our VMs (if directly attached) would get the equivalent of a public address, or which, more commonly, we will associate with a router as a “gateway” port.

In this case, we’re going to create a network called public, ensure it has the external tag associated with it, connect it to the physical_network “external” (this name must match the flat_networks and bridge_mappings entries we set in the ML2 configuration file), and use the “flat” network type (no L2 segmentation) to connect the logical port to the physical port.

neutron net-create public --router:external --provider:physical_network external --provider:network_type flat

Example output:

+---------------------------+--------------------------------------+

| Field | Value |

+---------------------------+--------------------------------------+

| admin_state_up | True |

| id | d9515d1a-e0f4-4983-bee9-4be247aaa63d |

| mtu | 0 |

| name | public |

| provider:network_type | flat |

| provider:physical_network | external |

| provider:segmentation_id | |

| router:external | True |

| shared | False |

| status | ACTIVE |

| subnets | |

| tenant_id | aeb95f9f730c42848440a6b06eadcce3 |

+---------------------------+--------------------------------------+

Now that we have a layer 2 segment, we’ll also want to associate L3 information with it (so that we can get a floating IP address, or so that our L3 agent router can get an external IP address). We’ll configure not only the upstream/next-hop L3 gateway (FLOAT_GW), but also a range of addresses: because we’re still sharing the larger network with our peers in this lab, we’ll take just a small pool of 10 addresses to allocate to our Floating IPs.

Also note that we’re not starting the DHCP service, which dramatically reduces the deployment methods available (specifically we are left with only one way to pass IP information to a tenant; config_drive), but we are only going to be manually configuring the IP address on the external interface, so perhaps this isn’t such an issue after all.
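Since the allocation pool has to lie inside the subnet's CIDR, the pool boundaries can be sanity-checked before the subnet is created. The sketch below does this with plain POSIX shell arithmetic. `ip_to_int` and `in_cidr` are hypothetical helpers, and the sample addresses 10.1.65.50 and 10.1.65.53 are borrowed from the example output shown for this step, not a recommendation for your pod. It assumes octets are written without leading zeros.

```shell
#!/bin/sh
# ip_to_int A.B.C.D: print the address as a single integer.
ip_to_int() {
    old_ifs=$IFS
    IFS=.
    set -- $1          # split the dotted quad into $1..$4
    IFS=$old_ifs
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# in_cidr IP NETWORK/PREFIX: succeed when IP falls inside the network.
in_cidr() {
    ip=$(ip_to_int "$1")
    net=$(ip_to_int "${2%/*}")
    prefix=${2#*/}
    mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
    [ $(( ip & mask )) -eq $(( net & mask )) ]
}

# Check a sample pool start, pool end, and gateway against the float network
for addr in 10.1.65.50 10.1.65.53 10.1.65.1; do
    if in_cidr "$addr" 10.1.65.0/24; then
        echo "$addr is inside 10.1.65.0/24"
    else
        echo "$addr is OUTSIDE 10.1.65.0/24"
    fi
done
```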

Example:

neutron subnet-create public --name public-subnet --allocation-pool start=FLOAT_IP_START,end=FLOAT_IP_END --disable-dhcp --gateway FLOAT_GW FLOAT_NETWORK

Substituting the values from the POD parameters table:

neutron subnet-create public 10.1.65.0/24 --name public-subnet --allocation-pool start=10.1.65.00,end=10.1.65.00 --disable-dhcp --gateway 10.1.65.1

Example output:

+-------------------+----------------------------------------------+

| Field | Value |

+-------------------+----------------------------------------------+

| allocation_pools | {"start": "10.1.65.50", "end": "10.1.65.53"} |

| cidr | 10.1.65.0/24 |

| dns_nameservers | |

| enable_dhcp | False |

| gateway_ip | 10.1.65.1 |

| host_routes | |

| id | 7cda4ca5-4f57-4c21-97d1-f57b5db00fca |

| ip_version | 4 |

| ipv6_address_mode | |

| ipv6_ra_mode | |

| name | public-subnet |

| network_id | d9515d1a-e0f4-4983-bee9-4be247aaa63d |

| subnetpool_id | |

| tenant_id | aeb95f9f730c42848440a6b06eadcce3 |

+-------------------+----------------------------------------------+

Step 19: Create a Tenant network:

We’ll now create a network for our tenants to actually connect VMs to. In this case we do want the DHCP service enabled, and we’ll also pass a DNS nameserver address so that our VMs can reach upstream services by name (e.g. you can then ping google.com rather than ping 74.125.224.9).

neutron net-create private-net

Example output:

+---------------------------+--------------------------------------+

| Field | Value |

+---------------------------+--------------------------------------+

| admin_state_up | True |

| id | bf39e612-f20f-49e6-83df-12d8bad52c0e |

| mtu | 0 |

| name | private-net |

| provider:network_type | gre |

| provider:physical_network | |

| provider:segmentation_id | 66 |

| router:external | False |

| shared | False |

| status | ACTIVE |

| subnets | |

| tenant_id | aeb95f9f730c42848440a6b06eadcce3 |

+---------------------------+--------------------------------------+

We also need to create a new subnet for the tenant network, so that the DHCP server has addresses to allocate to the VMs being created.

neutron subnet-create --name private-subnet --dns-nameserver 10.1.1.92 private-net 10.10.10.0/24

Example output:

+-------------------+------------------------------------------------+

| Field | Value |

+-------------------+------------------------------------------------+

| allocation_pools | {"start": "10.10.10.2", "end": "10.10.10.254"} |

| cidr | 10.10.10.0/24 |

| dns_nameservers | 10.1.1.92 |

| enable_dhcp | True |

| gateway_ip | 10.10.10.1 |

| host_routes | |

| id | 944bc913-23f3-4789-983f-8d3f07849893 |

| ip_version | 4 |

| ipv6_address_mode | |

| ipv6_ra_mode | |

| name | private-subnet |

| network_id | bf39e612-f20f-49e6-83df-12d8bad52c0e |

| subnetpool_id | |

| tenant_id | aeb95f9f730c42848440a6b06eadcce3 |

+-------------------+------------------------------------------------+

Next we’ll need a router, and initially we’ll use the default L3 agent configuration (which is also what we configured earlier in the Neutron configuration section).

neutron router-create router1

Example output:

+-----------------------+--------------------------------------+

| Field | Value |

+-----------------------+--------------------------------------+

| admin_state_up | True |

| distributed | False |

| external_gateway_info | |

| ha | False |

| id | e69f63a4-c7da-4fe6-81d7-9dc4f80ad94d |

| name | router1 |

| routes | |

| status | ACTIVE |

| tenant_id | 436a8b4b10ab4bcca035eda7a7520838 |

+-----------------------+--------------------------------------+

Let’s add a router interface on the private/internal network, as this is where VMs will be attached.

neutron router-interface-add router1 private-subnet

Example output:

Added interface f4dd3d9e-09a9-4bcf-9148-cd9b2b4052b9 to router router1

Then we’ll associate our “magical” external/public network with our “magical” router gateway port, enabling the subnet associated with the gateway port to present Floating IP addresses:

neutron router-gateway-set router1 public

Example output:

Set gateway for router router1
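For repeat runs, the commands from Steps 18 and 19 can be gathered into a single script. The sketch below is one way to do that, not part of the lab itself. The `run` wrapper, the `DRY_RUN` switch, and the variable defaults are assumptions made for the illustration: the pool defaults mirror the example output, so substitute the float range assigned to your pod. With `DRY_RUN=1`, the default here, it only prints the commands.

```shell
#!/bin/sh
# DRY_RUN=1 (the default in this sketch) prints each command instead of
# running it; set DRY_RUN=0 on the AIO node to execute for real.
DRY_RUN=${DRY_RUN:-1}

# Placeholder defaults; replace with the float range for your pod.
FLOAT_NETWORK=${FLOAT_NETWORK:-10.1.65.0/24}
FLOAT_GW=${FLOAT_GW:-10.1.65.1}
FLOAT_IP_START=${FLOAT_IP_START:-10.1.65.50}
FLOAT_IP_END=${FLOAT_IP_END:-10.1.65.53}
DNS_SERVER=${DNS_SERVER:-10.1.1.92}

# run CMD ARGS...: print the command in dry-run mode, otherwise execute it.
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

create_networks() {
    # Step 18: external network and its subnet
    run neutron net-create public --router:external \
        --provider:physical_network external --provider:network_type flat
    run neutron subnet-create public "$FLOAT_NETWORK" --name public-subnet \
        --allocation-pool "start=$FLOAT_IP_START,end=$FLOAT_IP_END" \
        --disable-dhcp --gateway "$FLOAT_GW"
    # Step 19: tenant network, subnet, and router
    run neutron net-create private-net
    run neutron subnet-create --name private-subnet \
        --dns-nameserver "$DNS_SERVER" private-net 10.10.10.0/24
    run neutron router-create router1
    run neutron router-interface-add router1 private-subnet
    run neutron router-gateway-set router1 public
}

create_networks
```

Reviewing the dry-run output before setting `DRY_RUN=0` gives a chance to catch a wrong pool range before Neutron records it.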